Shared memory documentation

-

“FalconTexturesSharedMemoryArea” is, as you say, blank. It stays blank until it is activated by adding this line to FalconBMS.cfg:

Set g-bExportRTTTextures 1

Once enabled, Falcon begins to draw images to this area, and it is this drawing that drags the FPS down, not the extractor program itself.

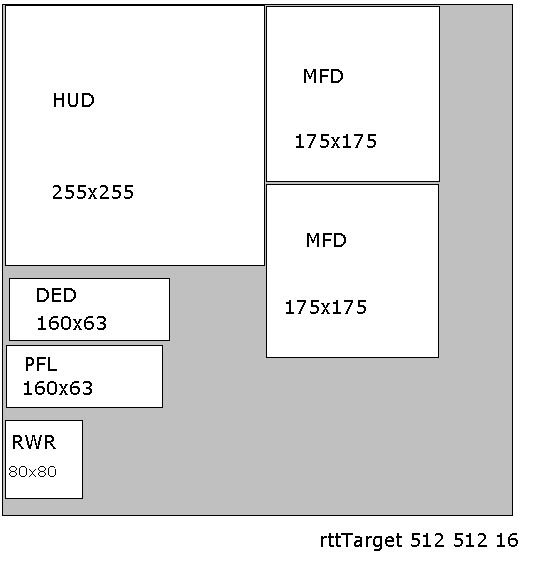

The size of this area, and the size and position of the MFDs, HUD, and some gauges within it, are set in the 3Dcockpit.dat file, something like this (this is from Falcon OF).

MFD-Extractor and also Jshepard’s program can take a copy from this area and show it on another screen.

Lightning’s program, Jshepard’s program, and Falcon BMS all extract MFDs in exactly the same way: by copying images from this area.

Yes, it copies from one desktop to another in 2D, and it also copies from the SharedMemoryArea to the desktop in 3D.

Reboot……

I’m sorry but the FalconTexturesSharedMemoryArea still just contains zeroes for me (the other areas I can read fine.)

Here is my falcon bms.cfg from the config folder : http://gigurra.se/falcon/falcon%20bms.cfg

and here is my 3dcockpit.dat : http://gigurra.se/falcon/3dckpit.dat

I’ve also tried editing the spelling to

set g_bExportRTTTextures 1

set g_nRTTExportBatchSize n (tried n = 1,2 and 5)

I’ve also tried setting (for ALL F-16 3dcockpit files and the root one)

rttTarget 600 600 32;

But still, FalconTexturesSharedMemoryArea = 00000…. (4 MB of zeros)

Lightning’s program’s MFDs don’t work for the new bms afaik (They are blank. Other data is read fine)

-

What is this “Yoda crazy shit” at the bottom of your .cfg file ?

-

Just a little info,

the rttTarget (in red) is the size of the SharedMemoryArea

the numbers (in red) are the positioning and size of the left and right MFD’s…… 225x225 pixels

// 3-d cockpit description

// N-pit

// Last change May 17 2007

// by Nanard

// Boresight cross height

boresighty 0.75;

// Shared render to texture target size. width height depth. depth needs to be 32 for texture alpha to work default is primary

rttTarget 512 512 16;

// 3-d coords for Upper left. Upper Right and Lower Left

// Rtt Top. Left. Right. Bottom. BlendMode. Alpha

// BlendModes are a = alpha blending. c = color blending. g = texture gouraud. t = texture. default = g

// example hud with 0.5 vertex alpha: hud 20.0 -2.0 0.0 20.0 2.0 0.0 20.0 -2.0 4.0 5 5 260 260 a 0.5;

// first number is toward you / away from you

// second number is left and right + number is right. - number is left

// third number is up / down - number is up. + number is down

// 0. 0 is in the middle of the screen (approximately)

hud 20063.0 -2750.0 -618.0 20063.0 2750.0 -618.0 20063.0 -2750.0 4902.0 0 0 280 280 c;

pfl 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 281 0 481 70 c;

ded 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 281 71 481 141 c;

rwr 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 482 0 600 118 c;

mfdleft 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 375 375 600 600 c;

mfdright 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 375 145 600 370 c;

hms 20.063 -4.000 -4.000 20.063 4.000 -4.000 20.063 -4.000 4.000 0 314 286 600 c;

-

Yes, I know these positions are outside, BUT: I also tried size 600 600 32 (rttTarget 600 600 32;) and it made no difference whatsoever.
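The "positions are outside" point can be checked mechanically. Below is a rough Python sketch that reads the lines of the 3dckpit.dat quoted in this thread and lists which regions do not fit inside the rttTarget. It assumes that each instrument line is a name, nine 3-d coordinates, then four integer RTT coordinates read as left, top, right, bottom. The file's own comment orders them differently, so treat that reading as a guess. The function names are made up for illustration.

```python
# Sample lines copied from the 3dckpit.dat quoted in this thread.
SAMPLE = """
// Shared render to texture target size. width height depth.
rttTarget 512 512 16;
hud 20063.0 -2750.0 -618.0 20063.0 2750.0 -618.0 20063.0 -2750.0 4902.0 0 0 280 280 c;
pfl 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 281 0 481 70 c;
ded 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 281 71 481 141 c;
rwr 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 482 0 600 118 c;
mfdleft 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 375 375 600 600 c;
mfdright 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 375 145 600 370 c;
hms 20.063 -4.000 -4.000 20.063 4.000 -4.000 20.063 -4.000 4.000 0 314 286 600 c;
"""


def parse_rtt(text):
    """Return ((width, height), {name: (left, top, right, bottom)})."""
    target = None
    regions = {}
    for raw in text.splitlines():
        # Drop // comments and the trailing semicolon.
        line = raw.split("//", 1)[0].strip().rstrip(";")
        if not line:
            continue
        parts = line.split()
        if parts[0] == "rttTarget":
            target = (int(parts[1]), int(parts[2]))
        elif len(parts) >= 14:
            # parts[1:10] are the nine 3-d coordinates; the next four are
            # ASSUMED to be left, top, right, bottom in texture pixels.
            regions[parts[0]] = tuple(int(p) for p in parts[10:14])
    return target, regions


def size(region):
    """Width and height of a region in pixels."""
    left, top, right, bottom = region
    return (right - left, bottom - top)


def out_of_bounds(target, regions):
    """Names of regions whose right/bottom edge exceeds the target size."""
    width, height = target
    return sorted(
        name for name, (_, _, right, bottom) in regions.items()
        if right > width or bottom > height
    )


if __name__ == "__main__":
    target, regions = parse_rtt(SAMPLE)
    print("mfdleft size:", size(regions["mfdleft"]))
    print("outside", target, ":", out_of_bounds(target, regions))
    print("outside (600, 600):", out_of_bounds((600, 600), regions))
```

With the quoted file, the left MFD comes out at 225x225 as stated above, and several regions fall outside a 512x512 target but inside a 600x600 one.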

-

Lightning’s program’s MFDs don’t work for the new bms afaik

That is why I posted Jshepard’s program, now that works fine in BMS.

-

That is why I posted Jshepard’s program, now that works fine in BMS.

Yes but I believe he actually doesn’t use shared memory. He relies on the built in extractor windows being up and running, and then he can use windows APIs to read the pixels of those windows.

Btw here is a sample source code to test shared memory

http://gigurra.se/falcon/src.txt

It reports data in all areas except the texture area. I can also program my own shared memory areas and it would report data there as well.

-

I also tried size 600 600 32 but it made no difference

Be careful changing the rttTarget it is very sensitive about size.

-

He relies on the built in extractor windows being up and running,

Now you’ve lost me, what is this “built in extractor windows” .

-

Be careful changing the rttTarget it is very sensitive about size.

Same result when keeping rttTarget 512 512 and limiting the paint areas to <512

-

Now you’ve lost me, what is this “built in extractor windows” .

BMS has a built in mfd extractor. It opens its own mfd windows.

-

Yes but I believe he actually doesn’t use shared memory. He relies on the built in extractor windows being up and running, and then he can use windows APIs to read the pixels of those windows.

I’m still lost

Jshepard’s program is copying images from the “shared memory area”, just like Lightning’s MFD-E. Neither of these programs gets its data from shared memory, because there isn’t any MFD data in shared memory.

-

Falcon MFD-Extractor read-me

http://dl.dropbox.com/u/5918219/Stephen/Falcon%20MFD%20Extractor%20v0.1.10.0%20User%20Manual.docx

http://dl.dropbox.com/u/5918219/Stephen/Falcon_MFD_Extractor_v0.2.0.2_User_Manual.doc

Edit: from Lightning……read-me v0.1.10.0

Enabling 3D Exporting in OpenFalcon

To use Falcon MFD Extractor in 3D Cockpit Mode with OpenFalcon, you need to configure OpenFalcon to export image data from the 3D cockpit to shared memory. This involves editing the FalconBMS.cfg file, located in your OpenFalcon installation folder.

Telling OpenFalcon to export images from the 3D cockpit

To enable 3D Cockpit image exporting from OpenFalcon, you will need to add the following line to the end of your FalconBMS.cfg file:

set g_bExportRTTTextures 1

This will tell OpenFalcon to export certain pieces of the 3D cockpit’s sensor/instrument imagery, to a bitmap in shared memory, at the coordinates defined in the 3dckpit.dat file (see the section in this document, entitled Obtaining 3D Cockpit Image Source Coordinates from OpenFalcon’s 3D Cockpit Definition Files, for more details on the 3dckpit.dat file)

Telling OpenFalcon How Often to Update the Exported Image

OpenFalcon has another setting that you can add to your FalconBMS.cfg file, which determines how often OpenFalcon updates the shared memory image. This setting looks like this:

set g_nRTTExportBatchSize 5

The g_nRTTExportBatchSize parameter works like this: Suppose we use the symbol n to refer to the value that you’ve assigned to this parameter. OpenFalcon then says, every (2^n) +1 frames – that is, every ((2 to the power of n) +1) frames – I’ll export a new image to shared memory. Let’s look at a couple possible values and their effects:

| Parameter Value | Effect |
| --- | --- |
| set g_nRTTExportBatchSize 1 | Shared memory image gets updated every 3 frames |
| set g_nRTTExportBatchSize 2 | Shared memory image gets updated every 5 frames |
| set g_nRTTExportBatchSize 3 | Shared memory image gets updated every 9 frames |
| set g_nRTTExportBatchSize 4 | Shared memory image gets updated every 17 frames |
| set g_nRTTExportBatchSize 5 | Shared memory image gets updated every 33 frames |
| set g_nRTTExportBatchSize 6 | Shared memory image gets updated every 65 frames |
| set g_nRTTExportBatchSize 7 | Shared memory image gets updated every 129 frames |
| set g_nRTTExportBatchSize 8 | Shared memory image gets updated every 257 frames |
| set g_nRTTExportBatchSize 9 | Shared memory image gets updated every 513 frames |
| set g_nRTTExportBatchSize 15 | Shared memory image gets updated every 32769 frames |

Since exporting these images to shared memory tends to have quite an impact on your in-simulation Frames-Per-Second display rate, OpenFalcon lets you, the user, decide how often you want these shared-memory images to be updated.

Setting the g_nRTTExportBatchSize parameter to low values (resulting in frequent refreshes of the shared memory bitmap) will have definite adverse effects on your in-game FPS rates in Falcon; setting the g_nRTTExportBatchSize parameter to higher values will result in slow output rates. That’s the tradeoff.
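To make the tradeoff concrete, here is a small Python sketch of the (2^n) + 1 rule described in the read-me. The function names and the 60 FPS figure are only illustrative assumptions, not anything taken from OpenFalcon itself.

```python
def export_interval_frames(batch_size: int) -> int:
    """Frames between shared-memory updates, per the read-me: (2^n) + 1."""
    return 2 ** batch_size + 1


def updates_per_second(batch_size: int, fps: float) -> float:
    """Roughly how often the exported image refreshes at a given frame rate."""
    return fps / export_interval_frames(batch_size)


if __name__ == "__main__":
    assumed_fps = 60.0  # hypothetical in-game frame rate, for illustration only
    for n in (1, 2, 5, 9):
        frames = export_interval_frames(n)
        rate = updates_per_second(n, assumed_fps)
        print(f"n={n}: every {frames} frames, about {rate:.2f} updates/s")
```

At an assumed 60 FPS, the default of 9 would refresh the exported image only about once every 8.5 seconds, which is why low values are needed for usable MFDs.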

If you do not specify a value for this parameter, the default value is:

set g_nRTTExportBatchSize 9

PS: I’m not a programmer, I’m just one of the Beta Testers that helped Lightning with MFD-Extractor.

Reboot.

-

Thanks for the correction Mark, (very interesting) but is it still not just copying images from the one place to the other, or is it more advanced than that, capturing data before it’s drawn ???… just what GiGurra is asking about.

We’re stepping perilously close to the edge of my knowledge here but I think the point is that the internal display export uses swap chains, which avoids having to render to textures held in system memory. The latter is computationally expensive and painful and increasingly poorly supported by the graphics devices (the normal case is that you want to zap bits to the card super fast and don’t care about getting bits back to main memory more or less ever, so why make that path optimized at all…or some such logic). At any rate, that’s my weak understanding and would go to explaining why the render to texture thing is generally going to be slower in performance terms.

-

……perilously close…knowledge…swap chains…computationally expensive…painful…poorly supported…zap bits…such logic…understanding…texture thing…performance…

So what you’re saying is, it’s more advanced than just copying an image from A to B.

Thanks Mark.

Well…… GiGurra, did you get all of that? If you quickly put together an extractor app then I will volunteer as your beta tester.

What do you say to that?

Reboot.

-

In the sense that Phileas Fogg and the space shuttle can both claim to have circumnavigated the earth, yes, different technology same result on your screen…eventually.

-

We’re stepping perilously close to the edge of my knowledge here but I think the point is that the internal display export uses swap chains, which avoids having to render to textures held in system memory. The latter is computationally expensive and painful and increasingly poorly supported by the graphics devices (the normal case is that you want to zap bits to the card super fast and don’t care about getting bits back to main memory more or less ever, so why make that path optimized at all…or some such logic). At any rate, that’s my weak understanding and would go to explaining why the render to texture thing is generally going to be slower in performance terms.

You don’t hold the master texture in SysRAM, you hold it in VRAM. Then you can download a copy to SysRAM.

After each render cycle you download the updated texture from VRAM to SysRAM.

Uploading and downloading texture data from VRAM is one of the most basic operations you can do (something I do every day at work and sometimes at home), and does not require much compute power unless you deal with very large textures (which we don’t). What you download or upload is just a buffer of bytes, which may be stored in whatever system RAM area you wish. (Again, these are super basic operations and can be done in OpenGL, OpenCL, Direct3D etc., although personally I have not done it in Direct3D, only OpenGL and OpenCL.)

For example, at work I download a 1080p texture at 32 bpp each frame from VRAM to SysRAM, from a Dell underclocked GTX 560 Ti. This takes me approximately 1-2 milliseconds iirc - 500+ fps. (Some implementations are slow though, like the Sandy Bridge integrated GPU we tried, which wouldn’t get above 19 fps while downloading equivalent data from VRAM.) We also shuffle around a lot of textures from different video frame grabbing hardware to video RAM and so on.

Downloading and uploading these each frame with today’s PCIe buses is, for the sizes we are talking about, a non-issue. However, drawing multiple windows has a higher cost in many ways, because windows must obey certain drawing rules, which may halt the simulation’s own rendering while the secondary windows wait for Windows’ (XP or 7 etc.) permission to draw. This might be even worse (I’m not sure how 7 implements this) if you have those rendered on separate monitors (other timings!). I am fairly confident this is what causes the slowdowns and major stuttering for me and others when using the built-in MFD extractor, and why I wish to get pure MFD frame buffers in system RAM instead.

Therefore the cost of downloading these textures from VRAM to SysRAM (and letting another application then handle them), which is not in any way limited by Windows 7/XP, can be much lower than rendering directly in another window.

EDIT: Oops sry I was wrong, the texture at work was 1680x1050, so not quite 1080p ;). Still pretty close.
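For a sense of scale, here is a back-of-the-envelope Python sketch of the numbers in this post. It only does the arithmetic on the figures quoted here (1680x1050 at 32 bpp in 1-2 ms, and a 600x600 texture area). The function names are made up, and the results are implied throughput, not measurements.

```python
def texture_bytes(width: int, height: int, bits_per_pixel: int = 32) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bits_per_pixel // 8


def implied_bytes_per_second(num_bytes: int, milliseconds: float) -> float:
    """Throughput implied by moving num_bytes in the given time."""
    return num_bytes * 1000 / milliseconds


if __name__ == "__main__":
    work_frame = texture_bytes(1680, 1050)  # texture size from the EDIT above
    cockpit_area = texture_bytes(600, 600)  # default rttTarget size in this thread
    print("1680x1050x32bpp:", work_frame, "bytes")
    print("600x600x32bpp:  ", cockpit_area, "bytes")
    for ms in (1, 2):
        gb_s = implied_bytes_per_second(work_frame, ms) / 1e9
        print(f"{ms} ms per download implies about {gb_s:.1f} GB/s")
    print("ratio:", work_frame / cockpit_area)
```

The 600x600 area is roughly a fifth of the size of the frame I download at work, so by the same arithmetic it should be well inside what the bus can handle per frame.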

Here is a comparison of PCIE-speeds to cost of downloading textures:

https://www.benchmarksims.org/forum/showthread.php?10150-Are-there-any-plans-to-expose-mfd-frame-buffers-textures-in-shared-memory

Here are some slightly old test results comparing upload to download (though I would ignore the 480 numbers as they seem to suffer from what is prob some driver issue, the gtx 285 seems OK though - in both cases however the UL speed is 2-5x the DL speed, but even with reduction it’s not a problem):

http://forums.nvidia.com/index.php?showtopic=166757

Here is a discussion about even doing it on an iphone ^^:

http://www.imgtec.com/forum/forum_posts.asp?TID=1068

One easy mistake to make when doing these things is to implicitly ask the graphics card or CPU to convert image data to fit a slightly different format (you might not even notice you asked for it). It is VERY important that downloads and uploads do not alter the format ;).

-

I’m still lost

Jshepard’s program is copying images from the “shared memory area”, just like Lightning’s MFD-E. Neither of these programs gets its data from shared memory, because there isn’t any MFD data in shared memory.

Sry I think you might be slightly mistaken here. I believe the following ways are used to get MFDs:

Falcon Vanilla/AF:

- Mfds are exported by reading fixed positions in the current d3d framebuffer in 2d views. This can be achieved by methods like hooking the d3d drawing functions, for example by dll injection.

OpenFalcon:

- Mfds are exported directly to system ram, which can be read by any application through using windows memory mapped files/shared memory.

BMS:

- Mfds are NOT written to system ram, but are probably rendered directly from VRAM in other windows with the built-in external instrument tool. JShephard’s application likely simply uses Windows (7/xp) API functions to find the desktop positions of these windows and use functions such as http://msdn.microsoft.com/en-us/library/dd144909(v=vs.85).aspx to read these pixels.
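For anyone who wants to repeat the "is the texture area all zeroes" test without compiling anything, here is a rough Python sketch. It assumes the area can be opened by the name used in this thread through Python's mmap tagname parameter (Windows only), and the 4 MB size is just the figure mentioned earlier in the thread, not a documented value. Note that on Windows this call will create a new zero-filled mapping if none exists, so an all-zero result by itself does not prove Falcon created the area.

```python
import mmap
import sys

AREA_NAME = "FalconTexturesSharedMemoryArea"  # name as quoted in this thread
ASSUMED_SIZE = 4 * 1024 * 1024                # "4 MB of zeros" per the thread; an assumption


def summarize(buf: bytes) -> dict:
    """Count non-zero bytes so a blank area is easy to spot."""
    nonzero = len(buf) - buf.count(0)
    return {"size": len(buf), "nonzero_bytes": nonzero, "blank": nonzero == 0}


def read_named_area(name: str, size: int) -> bytes:
    """Copy `size` bytes out of a named Windows file mapping."""
    if sys.platform != "win32":
        raise OSError("named file mappings via tagname are Windows-only")
    # fileno -1 means "not backed by a file"; tagname selects the named mapping.
    # Caveat: if no mapping with this name exists, Windows creates a new one.
    with mmap.mmap(-1, size, tagname=name) as view:
        return view[:size]


if __name__ == "__main__":
    if sys.platform == "win32":
        print(summarize(read_named_area(AREA_NAME, ASSUMED_SIZE)))
    else:
        # Off Windows, just demonstrate the check on a dummy buffer.
        print(summarize(bytes(16)))
```

If the requested size is larger than the existing mapping the open may fail, so the size may need adjusting to whatever the sim actually allocates.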

-

Your 3dckpt.dat seems strange. Why are you using 512x512x16? The default has 600x600 (if you look, some of the RTT textures are outside of the area you defined). BTW the bpp value is ignored nowadays.

Also, the correct spelling should be

set g_bExportRTTTextures 1

set g_nRTTExportBatchSize 1

- your uploaded .dat had g-bExportRTTTextures

The exported surface is (IIRC) an uncompressed 32bpp format and should be complete including the DDS header.
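If the area really does start with a DDS header as recalled above, a reader could sanity-check it before touching pixel data. The Python sketch below parses the standard 128-byte DDS preamble (the "DDS " magic plus the 124-byte header) as documented by Microsoft. Whether BMS places it at offset 0 of the shared area is an assumption based only on the IIRC above, and the helper names are made up. The pixel data offset of 128 also assumes there is no extended DX10 header.

```python
import struct

DDS_MAGIC = b"DDS "
DDS_HEADER_SIZE = 124   # dwSize field of the standard DDS_HEADER
PIXELFORMAT_OFFSET = 76  # 4-byte magic + 72 bytes of header fields before ddspf


def parse_dds_header(buf: bytes):
    """Return basic surface info, or None if buf does not start with a DDS header."""
    if len(buf) < 128 or buf[:4] != DDS_MAGIC:
        return None
    # dwSize, dwFlags, dwHeight, dwWidth, dwPitchOrLinearSize (little-endian DWORDs)
    size, _flags, height, width, pitch = struct.unpack_from("<5I", buf, 4)
    if size != DDS_HEADER_SIZE:
        return None
    # DDS_PIXELFORMAT: dwSize, dwFlags, dwFourCC, dwRGBBitCount
    _pf_size, _pf_flags, _fourcc, bit_count = struct.unpack_from(
        "<4I", buf, PIXELFORMAT_OFFSET
    )
    return {
        "width": width,
        "height": height,
        "pitch_or_linear_size": pitch,
        "bits_per_pixel": bit_count,
        "pixel_data_offset": 128,  # assumes no DX10 extension header
    }


def make_test_header(width: int, height: int, bpp: int = 32) -> bytes:
    """Build a synthetic header for trying the parser without the sim running."""
    buf = bytearray(128)
    buf[:4] = DDS_MAGIC
    struct.pack_into("<5I", buf, 4, DDS_HEADER_SIZE, 0, height, width, width * bpp // 8)
    struct.pack_into("<4I", buf, PIXELFORMAT_OFFSET, 32, 0, 0, bpp)
    return bytes(buf)


if __name__ == "__main__":
    print(parse_dds_header(make_test_header(600, 600)))
    print(parse_dds_header(bytes(128)))  # an all-zero area has no header
```

An area that is all zeroes fails the magic check straight away, which matches the blank result reported earlier in the thread.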

-

Answered this previously, sry, maybe I should post a correction to my uploaded file. In short, I have also tried setting the size to 600x600 and removing the bpp parameter, among many other things, but still get the same results: the shared textures memory area remains valid but blank.

Update: If you have time to post a working 3dcockpit.dat and config file, I could try that? (Assuming you know these actually do export shared textures?)

-

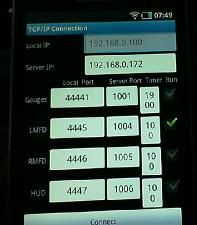

Settings like this should give you a 2D image of your desktop (you don’t have to start Falcon to see this).

When using BMS, we don’t need 2D MFDs, so don’t do anything with the coordinates.

This 2D image is just a test to see if it’s working correctly.

PS: If anyone needs help, you must say exactly what you can get working and what you cannot!

Reboot

I can’t get anything to work. There is no installation or application guide. Yes, I went through the Help.txt; there is no guide in there. I installed the apk, I installed the exe, and fired up BMS in window mode with the server running, but I am looking at a blank page. How do I set the client and server addresses etc.? These I don’t know. How do I set up the Android application?