What's up with those rumors

-

Support for W7 ending just means we need some guides on how to run BMS under Ubuntu for total newcomers to Linux.

Applying the logic regarding 12 being a higher number than 11, I guess that means Windows 8 is better than Windows 7.

-

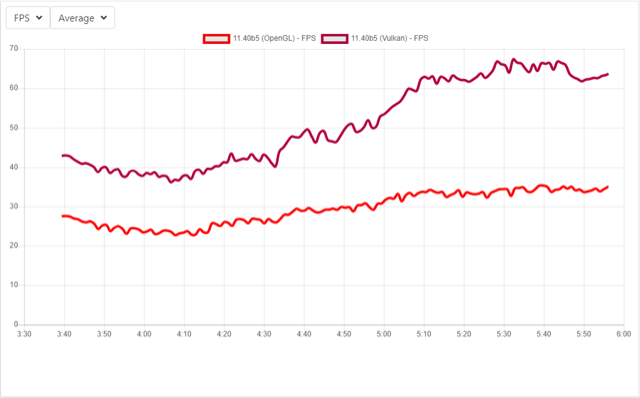

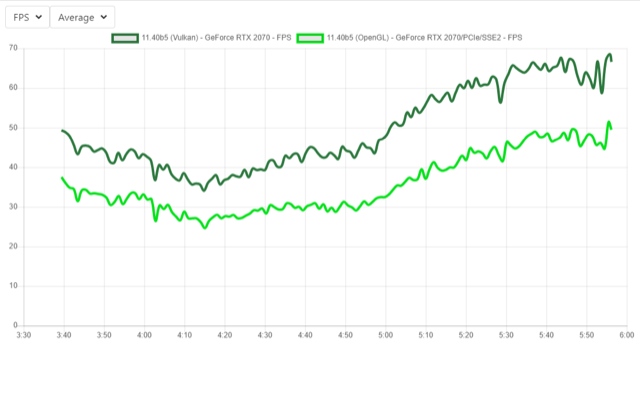

But yeah, as I’ve said… in average frames DX11 is the worst (besides unoptimized DX9)… and Vulkan is the BEST.

So… what’s wrong with Vulkan? Too fast?

– It’s not about the game (DOOM/Arma/BMS), but about the graphics engine’s ability to ‘do the math’ and to do it FAST on the GPU, so it’s about the speed of the graphics engine. Same sh** with programming: do the math in VB or C++… which one compiles/runs faster? You get my drift.

It’s not ‘a blind guess’ by DCS that drives them to the same conclusion… fix the graphics, make the experience more enjoyable, get playability… win-win situation. (Well… fix bugs, that is.)

So, I’m not comparing games, but the possibility of running max details on different engines… (I’ll see if I can dig up some benchmark software that covers all of DX9/11/12/OpenGL/Vulkan.)

…

Cheers

Well… sure, it seems like Vulkan is very good, but what I’m saying is that I don’t really think it has any considerable advantage over DX12 besides being multi-platform. Performance-wise Vulkan and DX12 look the same. I don’t know Vulkan or OpenGL very well, but I think I read somewhere that DX has more support and might be easier to handle coding-wise (but that isn’t confirmed!).

But of course, no doubt: I assume that if we could go Vulkan we would have done that instead, but the reality is that it won’t happen (at least not in the upcoming 3-4 weeks upgrade).

Also, one more thing I already mentioned before: in my eyes, people tend to give too much credit to the API. In the end, shaders are pretty simple programs (certainly compared to heavy OOP-style C++; shaders are mostly procedural, more like simple C than C++), and if they are written with the best optimization possible, the GPU will run them at the same or similar speed regardless of the API.

For example:

Even on DX11, I’ve seen that shader compilation is VERY efficient. A specific example: the compiler will remove any crap that isn’t needed at runtime, even if you are dumb enough to leave it in the code; I’ve seen it happen. It’s quite nice to see that the compiler is smart enough to figure out that if, say, some variable is never actually used in a pixel shader, it can skip all the instructions involving that variable without even bothering you. Like saying: “You are a fricking idiot, so I’ll just fix it for you.”
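To illustrate the kind of dead-code elimination described above, here is a minimal Python sketch on a made-up, straight-line “shader” instruction list. It is a toy backward-liveness pass, not how the actual HLSL compiler works internally; the `Instr` type, the register names and the `eliminate_dead_code` helper are all hypothetical. It assumes no branches and no instructions with side effects (a real compiler must keep things like UAV writes or `discard` even if their results look unused).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    """One instruction of a hypothetical shader IR: dest = op(srcs...)."""
    dest: str
    op: str
    srcs: tuple


def eliminate_dead_code(program, outputs):
    """Drop instructions whose result never reaches an output.

    Walks the straight-line program backwards, tracking which names are
    still needed ("live"). An instruction is kept only if its destination
    is live at that point; its sources then become live.
    """
    live = set(outputs)
    kept = []
    for instr in reversed(program):
        if instr.dest in live:
            kept.append(instr)
            live.discard(instr.dest)  # this write satisfies the later use
            live.update(instr.srcs)   # ...but now its inputs are needed
    kept.reverse()
    return kept


# A toy pixel shader: the fog value is computed but never used in the output.
program = [
    Instr("albedo", "sample", ("tex0", "uv")),
    Instr("fog", "mul", ("depth", "fog_density")),
    Instr("fog_factor", "exp", ("fog",)),
    Instr("lit", "mul", ("albedo", "light")),
    Instr("color", "add", ("lit", "ambient")),
]

optimized = eliminate_dead_code(program, outputs={"color"})
for instr in optimized:
    print(instr.dest, "=", instr.op, instr.srcs)
```

The two fog instructions are dropped because nothing that feeds `color` depends on them.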

I think that if your engine is efficient enough and you keep the GPU busy close to full utilization, then I honestly don’t know how much more of a boost a heavily multi-threaded API like DX12 or Vulkan will give you compared to, say, DX11. I hope you see what I mean.

From my (relatively short) experience with CPU/GPU, I think I can safely state that today’s CPUs are pretty fast, so any rendering-related task is done pretty quickly by the CPU (I mean specifically stuff like updating a dynamic vertex buffer, calculating world matrices or doing other vector/matrix math, and of course updating GPU state and executing commands). So the main source of inefficiency, in my eyes, is engines that weren’t built efficiently from the start --> the BMS engine is a very good example! Rendering the terrain with ~1000 draw calls just because there are many textures, that is insane! And running a draw call for EACH “part” of a 3D model, that is also insane! That’s where you start to eat up the CPU’s speed.

In simple words:

Make sure you don’t let the GPU breathe: keep it busy by executing HUGE geometry with as few draw calls as possible --> your engine will be fast, even with DX11.
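A minimal Python sketch of the “fewer, bigger draw calls” idea: mesh parts that share the same material are merged into one vertex/index buffer, so each material needs one draw call instead of one per part. The `MeshPart` type, the material names and `batch_by_material` are hypothetical and not taken from the BMS code; a real engine would also need texture arrays or atlases to batch parts that differ only by texture, and would upload the merged buffers to the GPU once rather than every frame.

```python
from dataclasses import dataclass


@dataclass
class MeshPart:
    """A hypothetical model/terrain piece with its own small buffers."""
    material: str   # texture + shader combination used to draw it
    vertices: list  # (x, y, z) tuples
    indices: list   # triangle indices into this part's own vertices


def batch_by_material(parts):
    """Merge parts sharing a material into one (vertices, indices) pair.

    Indices are shifted by the number of vertices already in the merged
    buffer, so they still point at the right vertices after merging.
    """
    batches = {}
    for part in parts:
        verts, idx = batches.setdefault(part.material, ([], []))
        base = len(verts)
        verts.extend(part.vertices)
        idx.extend(base + i for i in part.indices)
    return batches


tri = [(0, 0, 0), (1, 0, 0), (0, 1, 0)]
parts = [
    MeshPart("grass", tri, [0, 1, 2]),
    MeshPart("grass", tri, [0, 1, 2]),
    MeshPart("rock", tri, [0, 1, 2]),
    MeshPart("grass", tri, [0, 1, 2]),
]

batches = batch_by_material(parts)
print(len(parts), "draw calls before batching,", len(batches), "after")
```

Here four parts collapse into two batches (one per material); with thousands of small terrain or model parts the saving in per-call CPU overhead is what matters.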

-

In simple words:

Make sure you don’t let the GPU breathe: keep it busy by executing HUGE geometry with as few draw calls as possible –> your engine will be fast, even with DX11.

Something like this, I guess

-

I-Hawk, your screenshot looks strange, almost completely black. Is it North Korea at night? ;)

BTW, what is that tool for measuring performance? Looks like *nix nmon.

-

Nvidia-smi (or nvsmi for short)

https://developer.nvidia.com/nvidia-system-management-interface

If you have an Nvidia GPU, you can run it from the command line. By default it should be under your “C:\Program Files\NVIDIA Corporation\NVSMI” path. Just run it with the -l switch to get a continuous reading:

nvidia-smi -l

Windows Task Manager isn’t as accurate (at least in my experience). Process Explorer is much better, but NVSMI gives a more dedicated reading straight from Nvidia, so I trust it the most.
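If you want to log those readings rather than watch them scroll by, a small Python wrapper can parse nvidia-smi’s CSV query output. This is a sketch, assuming `nvidia-smi` is on PATH (otherwise pass the full path to the NVSMI folder mentioned above) and that the GPU reports numeric values; some GPUs report `[N/A]` for certain fields, which this sketch does not handle. The `--query-gpu`, `--format=csv,noheader,nounits` and `-l` options are standard nvidia-smi switches; the helper function names are made up for this example.

```python
import shutil
import subprocess

FIELDS = "utilization.gpu,memory.used,memory.total"


def parse_smi_line(line):
    """Parse one line like '87, 3512, 8192' (percent, MiB, MiB)."""
    util, used, total = (int(value.strip()) for value in line.split(","))
    return {"gpu_util_pct": util, "mem_used_mib": used, "mem_total_mib": total}


def sample_gpu(interval_s=1, exe=None):
    """Yield one parsed reading per output line until the caller stops.

    nvidia-smi prints one line per GPU every interval_s seconds.
    """
    exe = exe or shutil.which("nvidia-smi")
    if exe is None:
        raise FileNotFoundError("nvidia-smi not found; pass its full path as exe")
    cmd = [exe, f"--query-gpu={FIELDS}",
           "--format=csv,noheader,nounits", "-l", str(interval_s)]
    with subprocess.Popen(cmd, stdout=subprocess.PIPE, text=True) as proc:
        try:
            for line in proc.stdout:
                if line.strip():
                    yield parse_smi_line(line)
        finally:
            proc.terminate()  # stop the looping nvidia-smi when we are done
```

For example, `for reading in sample_gpu(): print(reading)` prints one dictionary per GPU per second until you break out of the loop or press Ctrl+C.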

-

My apologies in advance for asking, I-Hawk, but purely out of curiosity: why does that nVidia tool seem to you to be aimed mainly at their professional video cards, and only partly at their ‘consumer’ ones, judging from the product support notes?

Thanks a lot and, if so, sorry for bothering you.

With best regards.

-

Ha, not sure what they mean, possibly more advanced support. But anyway, AFAIK it is a reliable tool for querying GPU utilization, memory load, etc. For me it’s good enough.

-

Jackal

It’s just an SMI interface utility… nothing special… all overclocking utilities use this kind of approach (or even more complicated ones… writing directly to registers) when reading from/writing to the gfx card… it’s also useful for monitoring applications, since it’s direct… yes

In simple words:

Make sure you don’t let the GPU breathe: keep it busy by executing HUGE geometry with as few draw calls as possible –> your engine will be fast, even with DX11.

Nuff said. That’s right, and ‘au contraire’ to what I’m seeing now in BMS… GPU usage ‘jumps’ between 0 and 90-100% every 1-3 seconds, while the CPUs (threads) are at ~<50%, so… ‘she’ (the gfx card) is not busy at all… “when I’ll have sumting to do… I’ll do it… and IDLE”…

…but the CPUs are at 20-50%… doing… God knows what… but not much… SO THAT IS MY PROBLEM… wtf is going on? (The system is more or less optimized; nothing else is hogging the CPU or GPU.)

When I re-encode video… I get a nice 100% on the GPU and/or CPU (or both with OpenCL)… but BMS works in “spikes”… I would really like to take a look inside (under the hood) just once, maybe I can break something.

Any ideas?

Don’t get me wrong… I would gladly see BMS in DX12 (or even the slower DX11) rather than DX9, sheesh, we all would… (but if I could choose, I would rather try the Vulkan approach… I’m not pushing it, just saying)

Cheers
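One way to put numbers on the “spikes” described above is to collect utilization samples (for example with nvidia-smi’s loop mode) and summarize how often the GPU is idle versus saturated. This Python sketch uses made-up sample values shaped like the 0 to 90% pattern in the post, and the 10%/80% thresholds are arbitrary assumptions. A GPU that is both often idle and often near full load, with big swings between samples, is one possible sign that it is waiting on the CPU to feed it work; it does not prove that by itself.

```python
def summarize_utilization(samples, idle_max=10, busy_min=80):
    """Summarize GPU-utilization samples given in percent (0-100).

    idle_max and busy_min are arbitrary thresholds chosen for this sketch.
    """
    if not samples:
        raise ValueError("need at least one sample")
    n = len(samples)
    idle = sum(1 for s in samples if s <= idle_max)
    busy = sum(1 for s in samples if s >= busy_min)
    # Count jumps between consecutive samples that span the idle/busy gap.
    big_swings = sum(1 for a, b in zip(samples, samples[1:])
                     if abs(b - a) >= busy_min - idle_max)
    return {
        "mean_pct": sum(samples) / n,
        "idle_fraction": idle / n,
        "busy_fraction": busy / n,
        "big_swings": big_swings,
    }


# Hypothetical one-second samples resembling the pattern described above.
spiky = [0, 90, 5, 95, 0, 88, 2, 92]
print(summarize_utilization(spiky))
```

A steadily loaded GPU would instead show a high mean, an idle fraction near zero and few big swings.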

-

BMS engine currently is… as I explained here:

https://www.benchmarksims.org/forum/showthread.php?31216-AMD-RyZen-Build&p=513824&viewfull=1#post513824

We are dealing with a 20+ year old game engine. The first priority is to clean up that engine and prepare it for whatever current (and possibly future) technology BMS will run on. I believe the devs are already hard at work on this. That in itself will take some time. Optimize as much as can be optimized, and utilize multi-core technology in this optimization. In other words, make sure the GFX work (API calls, etc.) runs on a dedicated core. I foresee the need for multi-core CPUs on the horizon for BMS. I think most (if not all) VPs are using multi-core CPUs anyway. I-Hawk is 100% correct in saying that BMS needs to shunt the GFX information through to the GFX card to keep the render sequence “full”, so to speak. That will allow for much greater headroom.
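The “dedicated core for GFX” idea is usually implemented as a dedicated render thread fed by the simulation thread through a small queue. Below is a minimal Python sketch of that structure only; the function names and the fake “draw commands” are hypothetical, Python threads are not pinned to cores (pinning is OS-specific, e.g. `SetThreadAffinityMask` on Windows), and Python’s GIL means this shows the hand-off pattern, not a real parallel speed-up.

```python
import queue
import threading


def simulation_thread(frames, out_q):
    """Build a list of (hypothetical) draw commands per frame and hand it off."""
    for frame in range(frames):
        commands = [("draw", f"object{i}", frame) for i in range(3)]
        out_q.put(commands)  # blocks if the renderer is 2 frames behind
    out_q.put(None)          # sentinel: no more frames


def render_thread(in_q, submitted):
    """Consume frames in order; a real engine would call the graphics API here."""
    while True:
        commands = in_q.get()
        if commands is None:
            break
        submitted.append(len(commands))


frame_queue = queue.Queue(maxsize=2)  # small bound keeps input latency low
submitted = []
sim = threading.Thread(target=simulation_thread, args=(5, frame_queue))
ren = threading.Thread(target=render_thread, args=(frame_queue, submitted))
sim.start()
ren.start()
sim.join()
ren.join()
print(submitted)
```

The bounded queue lets the simulation run at most a couple of frames ahead of the renderer, so neither side races far ahead of the other.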

DX 11 versus DX 12: the final conclusion is that DX 12 has more tools to utilize than DX 11. DX 11.2 and 11.3+ add “Tiled Resources” (useful for rendering terrain textures with less memory), “Conservative Rasterization” (collision detection), “Default Texture Mapping” (reduces copying images from CPU to GPU) and more tools beyond that…

https://docs.microsoft.com/en-us/windows/win32/direct3d11/direct3d-11-3-features

Those tools are not available in DX 11.0, only in DX 11.2/11.3+. So if you’re going to want those tools, you are looking at Win 8.1 (for 11.2) or Win 10 (for 11.3).

DX 12 adds more to the list above. Also, there appear to be tools from Microsucks for running DX 12 on Win7. So if more rendering tools will make a difference in the models and terrain, then DX 12 would be the way to go…

https://docs.microsoft.com/en-us/windows/win32/direct3d12/new-releases

Either way, the more tools that are available to the DEV’s the better IMO.

-

BMS engine currently is… as I explained here:

https://www.benchmarksims.org/forum/showthread.php?31216-AMD-RyZen-Build&p=513824&viewfull=1#post513824

uupss… tl;dr, but yeah… exactly, thanks

-

Hmmm, X-Plane just released 2 screenshots showcasing the Vulkan FPS gain, which is enormous. Either the current renderer is badly written or Vulkan works pretty well after all.

https://www.facebook.com/339202526282/posts/10156408859841283/

Sent from my SAMSUNG-SM-T818A using Tapatalk

-

Dear I-Hawk, jhook and white_fang…

first of all, thanks a lot for your patience with me and for being so clear and simple, just to help me understand a bit more about such a specialized subject.

Well, you succeeded and were great in that also!

This said… it all must come to a practical conclusion, IMHO. And it is… tadaaa, “Let’s wait for three or four weeks!” :lol:

With best regards to all.

-

There’s no way around that one… :munch:

It is much more complicated than what we are discussing, but the basic principle is the same. I am happy with what I have now, and I will be happy with whatever the team decides. One thing to note is that work would need to get started as soon as possible (or perhaps it already has). The reason is that the code structure needs to be done before any assets are built. You cannot just build all the beautiful models and expect them to drop right in. The engine code comes first. Optimize as much as possible. Assess the APIs and how they will be computed. Build and test assets. Sounds simple, but it is actually a very long process. So, 3 to 4 Falcon weeks. Minimum.

-

Good to know things are still happening.

-

Whoooooooooo! Am I seeing right??

-

Hmmm, X-Plane just released 2 screenshots showcasing the Vulkan FPS gain, which is enormous. Either the current renderer is badly written or Vulkan works pretty well after all.

https://www.facebook.com/339202526282/posts/10156408859841283/

I’m not on FB so I’d appreciate it if you could post those screenies here, thanks Arty.

I have yet to come across a privately owned gaming system that XP11 would not bring to its knees, no matter how good the hardware is, so this would mean an enormous improvement.

They’d naturally tend towards a cross platform API as XP supports all 3 major players: win, mac & thankfully Linux as well.

All the best, Uwe

-

-

Thank you very much, Arty!

That’s impressive-looking indeed. I hope they’ll bless XP11 with vulkan support as well and don’t “save” it as a feature for XP12 (though I’ll certainly upgrade to that as well, no questions asked).

All the best,

Uwe

-

Whoooooooooo! Am I seeing right??

Lol, STILL a lot of the same peeps around too. How is the drama these days?